Procrastination, or ‘the action of delaying or postponing something’, is a widespread habitual weakness common to most people in the world (unless you are superhuman, in which case this article does not apply to you). Specifically, it involves the pursuit of activities that provide short-term gratification while simultaneously delaying any task which is particularly laborious or unpleasant. Procrastination often manifests as a coping mechanism for dealing with the pressure and anxiety surrounding personal trials, for example revising for exams or writing a novel. Although procrastination has acquired a reputation for laziness, avoidance and sloth, stressed-out students may take heart to learn that scientists are attempting to understand the neurological and psychological underpinnings of task avoidance, and whether it may actually confer beneficial effects on intelligence, creativity and development.

From a psychological perspective, procrastination is a problem of self-control which leads the procrastinator towards behaviours that provide short-term relief by making a stressful or boring task immediately avoidable. Task avoidance may be easier to understand if we look at another model of self-control, for example in a dieter. Before going to a restaurant the dieter may be set on not ordering dessert but, once the time comes, they may give in to the lure of a moist sticky toffee pudding. Inevitably, following this decision the would-be dieter is likely to regret their choice and may be racked with feelings of guilt and self-hatred. This behaviour stems from a disproportionate preference for immediate gratification (a sugary snack) over more beneficial long-term rewards (better health) and is known as ‘systematic preference reversal’. These brief but powerful lapses in self-control reflect the brain’s preference for behaviours that provide instant gratification over the pursuit of goal-directed achievement.

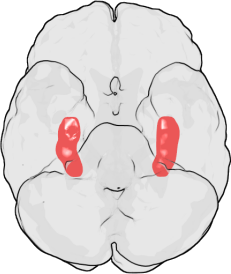

On a neurobiological level, procrastination actually appears to be emotionally driven, stemming from an internal desire to protect ourselves from negative feelings associated with the fear of failure. The amygdala is a brain region which has been associated with mediating a diverse range of normal behavioural functions including fear, emotion, memory and decision-making, and is also involved in a number of psychological disorders including anxiety and phobias. This complex aggregation of brain cells has also been implicated in the neurobiology of procrastination, due to its role in establishing what is known as the ‘fight or flight’ response. This physiological reaction is most commonly linked to situations involving a threat to survival, but has also been applied as an explanation for task avoidance and procrastination. When we start to feel emotionally overwhelmed by an activity that is particularly challenging, or by the accumulation of multiple demanding projects, the amygdala induces this fight or flight reaction in an attempt to protect us from negative feelings of panic, depression or self-doubt. When the amygdala detects a threat, for example when you begin to panic about how much you have to do before your exam tomorrow, it floods your system with the hormone adrenaline. Adrenaline can dull the responses of brain regions involved in planning and logical reasoning, leaving you at the mercy of more impulsive brain systems which may convince you that sitting on Facebook for the next few hours is really not such a bad idea, even though you have an exam tomorrow. Short-term gratification can immediately relax us and improve our mood via the production of the neurotransmitter dopamine. Dopamine has a major role in reward-motivated behaviour; indeed, most behaviours that make us happy increase the levels of dopamine in the brain. This is when emotional memory comes into play: the brain will stimulate you to repeat an activity that has increased your happiness (and thus dopamine levels) in the past. The amygdala therefore encourages you to pursue such behaviours, even though the advantages of doing so are only short-term.

Research by Laura Rabin of Brooklyn College also implicates frontal regions of the brain, involved in what is known as ‘executive functioning’, in the induction of procrastination. Executive functioning is a term that encompasses a number of different processes, including problem-solving, changing actions in response to new information, planning strategies for approaching and completing complex tasks and, most importantly, regulating our own emotions, behaviours and cognition. Despite only demonstrating a correlative link (i.e. it is unclear if changes in executive functioning directly cause procrastination), this study suggests that procrastination may emerge as a result of a dysfunction of the brain systems that produce executive function.

But does procrastination deserve its bad reputation, or might task avoidance actually confer some benefit? Some studies suggest that daydreaming, a well-known form of procrastination, may in fact be beneficial for the developing mind. Research by Daniel Levinson of the University of Wisconsin-Madison demonstrated that children who are regular daydreamers actually have better ‘working memory’, i.e. the ability to juggle multiple thoughts simultaneously, than their less dreamy counterparts. Working memory capacity has been positively correlated with reading comprehension and even IQ score, and may represent a cognitive advantage in the ability to handle multiple complex thought processes all at once. Further to this, daydreaming and procrastination have been argued to be beneficial as a type of rest for the brain. However, these potential benefits remain highly controversial, and are obviously subject to individual trait and personality differences.

So, if you are reading this instead of revising, writing your dissertation, or composing a report, don’t despair. Neuroscience offers us several ways we can tackle our tendency to procrastinate.

As procrastination stems from a split-second emotional reaction that represses our reasonable thought processes (i.e. to be productive) and yearns for a happiness boost, we need to train our brains to see the completion of a task itself, rather than the procrastination, as the dopamine-producing experience. So we should focus on rewarding ourselves for completing steps of a challenging project, rather than punishing ourselves for not getting it done. If a reward (no matter how small) is in sight, the amygdala and the rest of your brain will encourage you to work towards that reward, and will therefore be less likely to initiate behaviours that distract you from achieving your goals. As dopamine release is triggered by things that make us happy, dividing your work into small sections with a reward at the end of each step may be beneficial. So for every chapter you read or 100 words you write, maybe reward yourself with a YouTube clip of a cat singing, or a sneezing panda?

Another key element of procrastination is that we often set ourselves unrealistic goals, and when we fail to complete these we panic, and so does the amygdala, setting in motion a series of neurochemical reactions that will, quite literally, stimulate us to do anything but the task at hand. So throw those unrealistic goals out the window, break your work down into smaller, manageable pieces and make sure you reward yourself each time you complete one. Finally, if you start to panic about the enormity of the task ahead, stop, take a breather, and give your rational mind the time to remind you that there is light at the end of the tunnel and that the less you avoid it, the faster you will get there!

Post by: Isabelle Abbey-Vital

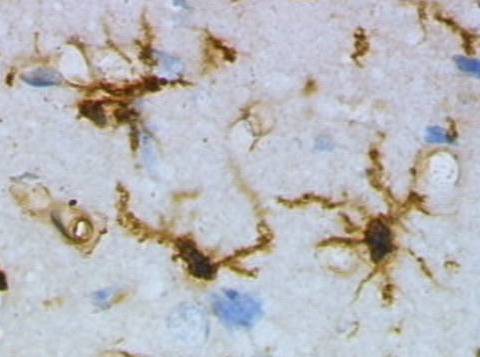

Resting (top) and activated (bottom) rat microglia after a brain injury. Images by Grzegorz Wicher (Wikicommons).

There are, however, candidate techniques that could be improved or perhaps combined. Imaging techniques, including optical,

I see the BRAIN initiative as a very worthy cause, a good example of aspirational ‘big science’ and a great endorsement for future neuroscience. One gripe I have with it, however, is that it seems a little like Obama’s catch-up effort in response to Europe’s Human Brain Project (HBP). The HBP involves 80 institutions striving towards creating a complex computer infrastructure powerful enough to mimic the human brain, right down to the molecular level. Which begs the question: surely in order to build an artificial brain you need to understand how it’s put together in the first place? I really hope that the BRAIN initiative and Human Brain Project put their ‘heads together’ to help each other in untangling the complex workings of the brain.

Savant syndrome is an incredibly rare and extraordinary condition in which individuals with neurological disorders acquire remarkable ‘islands of genius’. What’s more, these ‘superhuman’ savants may be crucial in understanding our own brains. ‘Savant’, derived from the French verb savoir meaning ‘to know’, is a term used to describe those who have a condition that often has a profound impact on their ability to perform simple tasks, like walking or talking, but who show astonishing skills that far exceed the cognitive capacities of most people in the world. Autistic savants account for 50% of people with savant syndrome, while the other 50% have other forms of developmental disability or brain injury. Quite remarkably, as many as 1 in 10 autistic people show some degree of savant skill.

Leslie Lemke was born with cerebral palsy and brain damage, and was diagnosed with a rare condition that forced doctors to remove his eyes. Leslie was severely disabled: throughout his childhood he could not talk or move. He had to be force-fed in order to teach him how to swallow and he did not learn to stand until he was 12. Then one night, when he was 16 years old, his mother woke up to the sound of Leslie playing Tchaikovsky’s Piano Concerto No. 1. Leslie, who had no classical music training, was playing the piece flawlessly after hearing it just once earlier on the television. Despite being blind and severely disabled, Leslie showcased his remarkable piano skills in concerts to sell-out crowds around the world for many years.

What is important to consider is that not all savants have developmental neurological disorders. The syndrome does sometimes emerge as a consequence of severe brain injury. Orlando Serrell is an ‘acquired savant’ who, at 10 years old, was violently struck on the left-hand side of his head by a baseball. Following the incident, Orlando suddenly exhibited astonishingly complex calendar-calculating abilities and could accurately recall the weather of every day since the accident. Orlando’s case and others like it suggest the intriguing possibility that a hidden potential for astonishing skills or prodigious memory exists within all of us, expressed as a consequence of complex and unknown triggers in our environment. The prospect of dormant ‘superhuman’ gifts is a much-debated topic, and may have a whole range of implications for the future.
